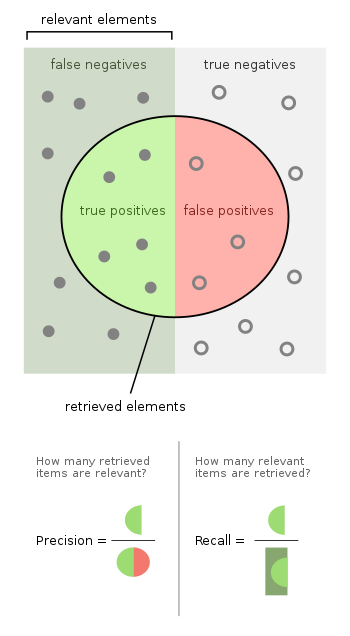

class: center, middle, inverse, title-slide # Statistics for International Relations Research II ## Models for Binary Outcomes ### <large>James Hollway</large> --- class: center, middle .pull-1[.circleon[]] .pull-1[.circleon[]] .pull-1[.circleon[]] --- class: center, middle .pull-1[.circleon[]] .pull-1[.circleon[]] .pull-1[.circleon[]] ??? This week we're going to talk about models for binary data. We'll talk about why OLS is poor (or is it?) for binary data, and what the alternatives are, paying special attention to _the logit_. Next we're going to discuss the interpretation of logit models. We'll talk about how one can talk about log-odds and predicted probabilities, marginal effects and odds ratios. Lastly, we'll talk about goodness of fit measures for logits, which is a bit of an applied version of the bit at the end of last time that we didn't have time for... We'll talk about likelihood ratios and Wald tests, as well as ROC curves and cross-validation. --- class: center, middle # Binary Models .pull-1[.circleon[]] .pull-1[.circleoff[]] .pull-1[.circleoff[]] --- ## Binary response variables .pull-left[ Some response variables are intrinsically binary because they exist or they don’t: - Going to war or not - Whether a country ratifies an international agreement or not ] .pull-right[ Others effectively binary, represented as binary out of convenience, or to concentrate on polar choices: - Whether a country is a democracy or not (sort of...) - Voting for a Republican or a Democrat ] -- .pull-left[  ] .pull-right[ Quick rant: Dichotomizing continuous variables replaces information with arbitrary assumptions. Even worse when done with multiple variables. If we .red[median split] two variables (e.g. $ and shoe size), both will have identical distributions... `\(\mu = .5, \sigma = .252\)`. Moreover, impossible to “get back to” underlying mean or variance on basis of 0/1 indicators. ] -- So binary response variables common and flexible, but we need to be careful. So, how do we model them? ??? This is why _measurement_ is really one of the most important parts of all statistics... nay, research! --- ## Today's data .pull-left[  <div class="Rtable1"><table class="Rtable1"> <thead> <tr> <th class='rowlabel firstrow lastrow'></th> <th class='firstrow lastrow'><span class='stratlabel'>Democrat<br><span class='stratn'>(N=51)</span></span></th> <th class='firstrow lastrow'><span class='stratlabel'>Republican<br><span class='stratn'>(N=49)</span></span></th> <th class='firstrow lastrow'><span class='stratlabel'>Overall<br><span class='stratn'>(N=100)</span></span></th> </tr> </thead> <tbody> <tr> <td class='rowlabel firstrow'><span class='varlabel'>War</span></td> <td class='firstrow'></td> <td class='firstrow'></td> <td class='firstrow'></td> </tr> <tr> <td class='rowlabel'>Mean (SD)</td> <td>0.569 (0.500)</td> <td>0.980 (0.143)</td> <td>0.770 (0.423)</td> </tr> <tr> <td class='rowlabel lastrow'>Median [Min, Max]</td> <td class='lastrow'>1.00 [0, 1.00]</td> <td class='lastrow'>1.00 [0, 1.00]</td> <td class='lastrow'>1.00 [0, 1.00]</td> </tr> <tr> <td class='rowlabel firstrow'><span class='varlabel'>VotedBush</span></td> <td class='firstrow'></td> <td class='firstrow'></td> <td class='firstrow'></td> </tr> <tr> <td class='rowlabel'>Mean (SD)</td> <td>51.0 (7.46)</td> <td>58.6 (7.98)</td> <td>54.8 (8.58)</td> </tr> <tr> <td class='rowlabel lastrow'>Median [Min, Max]</td> <td class='lastrow'>51.2 [39.0, 67.0]</td> <td class='lastrow'>57.6 [39.0, 73.7]</td> <td class='lastrow'>53.8 [39.0, 73.7]</td> </tr> </tbody> </table> </div> ] .pull-right[ .panelset[ .panel[ .panel-name[Sen Code] ```r library(ggparliament) data("election_data") us_sen <- election_data %>% filter(country == "USA" & year == 2016 & house == "Senate") us_sen <- bind_rows(us_sen, us_sen[1:2,]) %>% mutate(year = 2002, government = c(1,1,0,0,0), seats = c(48,29,1,1,21)) us_sen <- parliament_data( election_data = us_sen, type = "semicircle", parl_rows = 4, party_seats = us_sen$seats) senate <- ggplot(us_sen, aes(x, y, colour = party_long)) + geom_parliament_seats() + geom_highlight_government(government == 1) + theme_ggparliament(legend = FALSE) + labs(colour = NULL, title = "United States Senate", subtitle = "Black circles highlight those who voted for war.") + scale_colour_manual(values = us_sen$colour, limits = us_sen$party_long) senate ``` ] .panel[ .panel-name[Sen Plot]  ] .panel[ .panel-name[Mosaic Code] ```r library(ggplot2) # ggplot(iraqVote, aes(x = factor(War))) + geom_bar() + xlab("Voted for War") iraqVote$WarVote <- ifelse(iraqVote$War==1, "Aye", "Nay") library(ggmosaic) ggplot(data = iraqVote) + geom_mosaic(aes(x = product(WarVote, Republican), fill=WarVote)) + ylab("Vote") + xlab("Party") + theme_minimal() ``` ] .panel[ .panel-name[Mosaic Plot]  ] ] <!--  --> ] ??? So here we have some data on senators voting to authorise the use of military force in Iraq. --- ## The Linear Probability Model .pull-left-1[ <img src="STAT_L5_Binary_files/figure-html/xyc-1.png" width="504" /> ] .pull-right-2[ One option, called the .red[Linear Probability Model] (LPM), is just to do a regular OLS regression of `\(Y\)` on `\(X\)`, but with a binary response variable. ] -- .pull-right-2[ We model the expected value of `\(Y\)` as linear function of `\(X\)`, \\( E(Y) = \beta X \\), which in the binary case is: $$ E(Y) = \Pr(Y = 1) = 1[\Pr(Y = 1)] + 0[\Pr(Y = 0)] $$ So we model: $$ \Pr(Y_i = 1) = \beta X_i $$ The figure shows this result (for one continuous independent variable). ] -- .pull-left[ Can you spot any potential problems with this?] --- ## What's wrong with this? .pull-left-1[ <img src="STAT_L5_Binary_files/figure-html/xyc2-1.png" width="504" /> ] .pull-right-2[ __Nonsensical predictions__ (i.e., probabilities that are < 0 or > 1): If aye=1 and nay=0, what is 0.6, 0.9, or 1.2 aye? ] -- .pull-right-2[ __Heteroskedastic variance__ by construction: Since `\(Y\)` is binomial, \\(Var(Y) = E(Y)[1 - E(Y)] = X_i\beta(1 - X_i\beta)\\), error term can only take on two values: either \\( 1 - X_i\beta \\) (distance from prediction to 1) or \\(-X_i\beta\\) (distance from prediction to 0). So errors _never_ normally distributed, implying that traditional _t_-tests for individual significance and _F_-tests for overall significance are invalid. ] -- Wrong __functional form__: We’d usually expect variables that impact probabilities to exhibit diminishing returns: as their value increases, the impact on `\(Y\)` would decrease. We want diminishing returns because 100% certainty something will happen _should_ be rare. LPM doesn’t allow this; is constant for all values of `\(X\)` and `\(Y\)`. --- ## So what? Note that (with the possible exception of the last one) none of these problems go to bias; OLS point estimates \\( \hat\beta \\) remain unbiased estimates of the true parameter values \\( \beta \\). -- That’s why you still LPM used sometimes in econometrics or experimental studies because: - **Interpretability**: OLS estimates intuitive to understand and interpret; output from logits/probits less so. - **Similar model fit**: If probabilities you are modelling are moderate (say mostly between .2 and .8), then linear and logistic models (next) fit about equally well. -- .pull-left[ Still, if you submit an LPM to a polisci journal these days, you’ll get __desk-rejected__. The rationale is that we have no real excuse not to use more appropriate models, which are very common and well understood by now. So, what’s the alternative? ] .pull-right[  ] --- ## Logistic regression .red[Logistic regression] is a .red[generalized linear model] (GLM) applied to categorical outcomes using a .red[logit link function]. Because it represents a way to relate discrete or continuous variables to a discrete outcome, it forms the basis for many classification problems, as we shall see. -- ### What’s the difference between the logit and the logistic? Recall that in GLMs, instead of using Y as the outcome, \\(\mu_Y\\), we use a .red[link function] of the mean of Y, \\(f(\mu_Y)\\). The .red[logit] is a link function/transformation of a parameter \\(\pi\\). We transform probability, \\(\pi\\), into odds, \\(\frac{\pi}{1-\pi}\\), and then take the log, \\(\log(\frac{\pi}{1-\pi})\\). This link function allows us to linearly relate `\(X\)` to `\(Y\)`. The .red[logistic] is the inverse of the logit, \\(\frac{\exp(x)}{1 + \exp(X)}\\). We get it by exponentialising out of the log-odds framework. We use the logit link function to linearly relate the RHS to the LHS, \\(\log(\frac{\pi}{1-\pi}) = X\beta\\), and the logistic inverse of that to talk about probabilities, \\(\pi = \frac{\exp(x)}{1 + \exp(X)}\\). -- Huh? I’ll walk you through in four steps... --- ## Four steps .pull-left[ <img src="STAT_L5_Binary_files/figure-html/probs-1.png" width="504" /> ] .pull-right[ __First__, binary outcomes are binary, 0 or 1, and we want something continuous so that we can relate a continuous left-hand side to a continuous right-hand side. The solution is to reconceptualise the outcome or response not as the raw value but the _probability_ of the outcome being one or the other. This gives us \\([0,1]\\). ] -- .pull-left[ <img src="STAT_L5_Binary_files/figure-html/oddsdist-1.png" width="504" /> ] .pull-right[ __Second__, probabilities are bounded between 0 and 1, and we want something unbounded. The solution is to get the _odds_ of the probability of something happening: \\(\frac{p}{1-p}\\). We’ll talk a little more about odds later. Since probability can never be less than 0 or greater than 1, this gives us \\([0,+\infty]\\). ] --- .pull-left[ <img src="STAT_L5_Binary_files/figure-html/logitdist-1.png" width="504" /> ] .pull-right[ __Third__, odds are still lower bounded at zero and are badly skewed. The solution is to take the log of the odds, .red[log-odds] = `\(\log(\frac{p}{1-p})\)`, commonly called the .red[logit]. This gives us `\([-\infty,+\infty]\)`, which is finally the range that the linear RHS could take. ] -- .pull-left[ <img src="STAT_L5_Binary_files/figure-html/logistdist-1.png" width="504" /> ] .pull-right[ __Lastly__, we take the inverse of the logit to give the .red[logistic] curve. This offers a nice sigmoidal curve (i.e. S-shaped). Log-odds used for a while, e.g. by Charles Sanders Pierce in the late 19th century. Logit transformation and logistic regression developed into roughly current form by Sir David Cox in 1960’s. Generalized linear models with various link functions came later. ] ??? So we need a function of the probability that does two things: (1) converts a probability into a value that runs from -∞ to ∞ and (2) has a linear relationship with the Xs. --- ## But what does this mean? Three ways of conceiving of this transformation: 1. As an expression of odds of observing (I personally find this most intuitive) 1. As a continuous/latent/unobservable \\(Y^\*\\) that relates somehow to the observed values of Y (\\(Y^\*\\) is not part of your data, it is part of the model). I.e. assume that `\(Y=1\)` if the signal of \\(Y^\*\\) is above some threshold, and otherwise `\(Y=0\)`. <!-- Furthermore, assume, without loss of generality, that this threshold is zero. --> 1. As an expression of choice based on some utility function -- I'll elaborate on this a bit more next time... --- ## Logistic PDF .pull-left[ If we assume that the error term follows a standard logistic distribution, we get a logit model... The standard logistic probability density is: $$ \Pr(u) = \lambda(u) = \frac{\exp(u)}{[1+\exp(u)]^2} $$ That’s the probability density function (PDF), the term for the probability that _u_ takes on some specific value. The standard logistic has the following characteristics: 1. It is “bell-shaped” and symmetrical around zero - I.e. \\(\lambda(u) = 1 - \lambda(-u) \text{ and } 1 - \Lambda(-u)\\). 1. The PDF has a variance equal to \\( \frac{\pi^2}{3} \approx 3.29 \\) - I.e. fatter “tails” than the standard normal distribution ] .pull-right[ ```r ggplot(data.frame(x = c(-4, 4)), aes(x = x)) + stat_function(fun = dnorm, colour = "blue") + stat_function(fun = dlogis, colour = "red") + theme_minimal() ``` <img src="STAT_L5_Binary_files/figure-html/pdfcompare-1.png" width="504" /> ] ??? There's often some confusion in terminology: Binary logistic regression is an instance of a generalized linear model (GLM) with the logit link function. The logit function is the inverse of the logistic function, and the logistic function is sometimes called the sigmoid function or the s-curve. Logistic regression estimation obeys the maximum entropy principle, and thus logistic regression is sometimes called “maximum entropy modeling”, and the resulting classifier the “maximum entropy classifier”. --- ## Logistic CDF .pull-left[ Once we assume that error is distributed according to the standard logistic, we can say something about the probability that `\(Y = 1\)`: $$ \Pr(Y_i = 1) = Y_i^* > 0 = \Pr(u_i ≥ -X_i\beta) $$ If we want to know the cumulative probability that a standard-logistic variable is less than or equal to some value, we consider the CDF of the logit: $$ \Lambda(u) = \int \lambda(u)du = \frac{\exp(u)}{1 + \exp(u)} $$ As an example, consider some values for _u_: - _u_ = 0 then \\(\Lambda(u) = 0.5\\) - _u_ = 1 then \\(\Lambda(u) = 0.73\\) - _u_ = 2 then \\(\Lambda(u) = 0.88\\) - _u_ = -1 then \\(\Lambda(u) = 0.27\\) - _u_ = -2 then \\(\Lambda(u) = 0.12\\) ] .pull-right[ ```r ggplot(data.frame(x = c(-4, 4)), aes(x = x)) + stat_function(fun = pnorm, colour = "blue") + stat_function(fun = plogis, colour = "red") + theme_minimal() ``` <img src="STAT_L5_Binary_files/figure-html/cdfcompare-1.png" width="504" /> Notice the sigmoidal curve and the thick tails! ] --- ## Now we can MLE like last time! .pull-left-1[ We can linearly relate the log-odds of the occurrence of some binary event of interest to a set of covariates: Through fancy algebra, we can determine the probability for the logit model: Turn it into a likelihood function: Add up all (N) observations to get overall likelihood (note the multiplication): Lastly, we take the log (again) to avoid teensy values: ] .pull-right-2[ $$ \log\Bigl(\frac{\Pr(Y_i = 1)}{1-\Pr(Y_i = 1)}\Bigr) = X_i\beta $$ $$ \Pr(Y_i = 1) = \frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)} $$ `$$L_i = \Bigl(\frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)}\Bigr)^{Y_i} \Bigl[1 - \frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)}\Bigr]^{1-Yi}$$` `$$L = \prod_i^N \Bigl(\frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)}\Bigr)^{Y_i} \Bigl[1 - \frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)}\Bigr]^{1-Yi}$$` `$$\log L = \sum_i^N \log\Bigl(\frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)}\Bigr) + (1 - Y_i) \log\Bigl[1 - \frac{\exp(X_i\beta)}{1 + \exp(X_i\beta)}\Bigr]$$` ] .pull-down[ This yields a .red[standard binary logit model], and then all we need to do is maximize this log-likelihood with respect to \\(\beta\\)s to obtain our MLEs! ] ??? Note that this slide is really only for enthusiasts. As applied researchers, you will never need to show this working. But I like to include these slides sometimes to show that this is not all just a black box; that it is really just a set of (by now) standard transformations to deal with particular properties of certain types of data. If you _are_ interested in going down this rabbit hole though, please make an appointment during my office hours! --- ## Other Binary Response Models ### Probit (Probability Logit, though perhaps better called the "normit") Main difference theoretical. It assumes errors in the underlying latent equation distributed _normally_. That is, - .red[logit] uses a logistic link function \\(f(\mu_Y) = \log(\frac{\pi}{1-\pi})\\) - .red[probit] uses an inverse normal distribution link function \\(f(\mu_Y) = \Phi^{-1}(P)\\) Some (e.g. some economists) argue assuming normal errors better justified because of the central limit theorem, etc. - Since standard logistic distribution has a larger variance, will also yield larger estimated \\(\hat\beta\\) (and s.e.). - As a result, logit estimates are usually roughly 1.8 times the size of probit ones (which is \\(\approx \sqrt{3.29}\\) - recall that the variance of the standard normal is 1, while that for the standard logistic is 3.29). BUT, because the CDF of the standard normal has no closed form solution, can’t figure out integral through algebra/calculus, and instead have to approximate it numerically (hence _z_-tables). And THOUGH we can approximate it very accurately, we can’t simply figure out probit probabilities using a garden-variety hand calculator. ??? In practice, you could use logit or probit (just slightly different log-odds), but there are some considerations: - Disciplinary use: Logits are more commonly used in poli sci, probits more in economics. - Interpretation: logit coefficients can be back transformed into odds ratios, a somewhat intuitive way to interpret the effect. Not the case with probit coefficients. - Coefficients for probit models can be interpreted as the difference in Z-score associated with each one-unit difference in the predictor variable. That’s not very intuitive. - Another way to interpret these coefficients is to use the model to calculate predicted probabilities at different values of X. - Assumptions about data and observations: Probit models can be generalized to account for non-constant error variances in more advanced econometric settings (known as heteroskedastic probit models) - The probit is used because it is easier to calculate if values are not independent over time. - If the binary choice is independent from other observations, then there is no difference between logit and probit. - Variable form: Logit is used if you want to calculate estimates for categorical data. --- ### Clog-log (Complementary Log-Log Model) - Whereas the log-log model approaches 0 quickly but 1 slowly, its inverse or 'complement', the clog-log, approaches 0 slowly but 1 quickly - It is therefore not symmetrical around 0.5 like the logit and probit are <!-- - The cloglog is called for if you believe that the probability of success rises slowly from zero, but then tapers off more quickly as it approaches one. --> - We’ll come back to the cloglog model a bit later on, when we discuss models for event counts... ### Scobit (Skewed-Logit) - Used where individuals with any initial probability of choosing either of two alternatives (0.5) are most sensitive to changes in independent variables. ### Tobit (Censored Regression Model) - Designed to estimate linear relationships between variables when there is either left- or right-censoring in the dependent variable. ??? I feel like tobits had their hey-day last decade, with a set of more high-profile papers. --- class: center, middle # Interpretation .pull-1[.circleoff[]] .pull-1[.circleon[]] .pull-1[.circleoff[]] ??? Ok, now that we've motivated and explained logistic regression, let's now talk about how to estimate (briefly) and interpret logit models. --- ## Estimation .pull-left[ Let's say we have a pretty straightforward model for which senators vote for authorizing use of force in Iraq: $$ \Pr(\text{War}_i = 1) = f\bigl(\beta_0 + \beta_1(\text{Republican}) + \beta_2(\text{VotedBush}) + u_i\bigr) $$ That is, whether a senator will vote for war depends on some function of - their general propensity to vote for war (\\(\beta_0\\)), - whether they are Republican or not (\\(\beta_1\\), because President Bush was Republican), and - what percentage of their constituents voted for Bush over Gore in the last election (\\(\beta_2\\), the rationale being that this reveals how precarious their seat is). We can estimate this model using (e.g.) the `glm()` function in R. ] .pull-right[ ```r fullmodel <- glm(War ~ VotedBush + Republican, data = iraqVote, family = binomial(link="logit")) tab_model(fullmodel, transform = NULL) ``` <table style="border-collapse:collapse; border:none;"> <tr> <th style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; text-align:left; "> </th> <th colspan="3" style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; ">War</th> </tr> <tr> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; text-align:left; ">Predictors</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">Log-Odds</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">CI</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">p</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">(Intercept)</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">-5.44</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">-10.27 – -1.26</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.017</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">VotedBush</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.11</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.03 – 0.21</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.012</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">Republican [Republican]</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">3.02</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.30 – 5.95</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.005</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm; border-top:1px solid;">Observations</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left; border-top:1px solid;" colspan="3">100</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm;">R<sup>2</sup> Tjur</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">0.315</td> </tr> </table> ] ??? We will encounter `glm()` again, as it refers to generalized linear models, which encompasses many other models too. The formula specification should look pretty familiar by now. You could use interactions or any other transformations you might want here. Note how I have specified that we will use a logit link function over e.g. a probit for the way in which we're expecting the errors to be distributed. Note also that I have told `sjPlot` _not_ to transform the coefficients... this will become clear in a couple of slides... --- ## (No Direct) Interpretation .pull-left[ On the right are the parameter estimates. The estimates from logistic regression characterize the relationship between the predictor and response variable on a .red[log-odds] scale. For example, this model suggests that for every one unit increase in VotedBush, the _log-odds_ of the senator voting for war increases by 0.11. Er, what? What the hell does that mean? Turns out there is no real intuitive way to interpret log-odds, but we have a few options to help us get the most out of this... ] .pull-right[ ```r fullmodel <- glm(War ~ VotedBush + Republican, data=iraqVote, family=binomial(link="logit")) tab_model(fullmodel, transform = NULL) ``` <table style="border-collapse:collapse; border:none;"> <tr> <th style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; text-align:left; "> </th> <th colspan="3" style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; ">War</th> </tr> <tr> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; text-align:left; ">Predictors</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">Log-Odds</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">CI</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">p</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">(Intercept)</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">-5.44</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">-10.27 – -1.26</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.017</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">VotedBush</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.11</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.03 – 0.21</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.012</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">Republican [Republican]</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">3.02</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.30 – 5.95</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.005</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm; border-top:1px solid;">Observations</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left; border-top:1px solid;" colspan="3">100</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm;">R<sup>2</sup> Tjur</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">0.315</td> </tr> </table> ] --- ## “Sign-ificance” .pull-left[ <table style="border-collapse:collapse; border:none;"> <tr> <th style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; text-align:left; "> </th> <th colspan="3" style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; ">War</th> </tr> <tr> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; text-align:left; ">Predictors</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">Log-Odds</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">CI</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">p</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">(Intercept)</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">-5.44</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">-10.27 – -1.26</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.017</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">VotedBush</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.11</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.03 – 0.21</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.012</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">Republican [Republican]</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">3.02</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.30 – 5.95</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.005</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm; border-top:1px solid;">Observations</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left; border-top:1px solid;" colspan="3">100</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm;">R<sup>2</sup> Tjur</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">0.315</td> </tr> </table> ] .pull-right[ One option for interpretation is what I call “sign-ificance”: 1. See whether (and to what extent) \\(\beta\\) statistically differentiable from zero 1. See whether the sign of \\(\beta\\) is: - +: increases in `\(X\)` increases `\(\Pr(Y = 1)\)` - -: increases in `\(X\)` decreases `\(\Pr(Y = 1)\)` Together this is sufficient for hypothesis-testing: it tells us whether there is a “signal” for a pattern in the data that goes in the same direction as hypothesised and unlikely to appear just by chance. ] -- Therefore common to see articles using this approach where authors only focused on hypothesis testing, and trying to avoid problems with using odds-ratios (and log-odds) to compare effect sizes within and across models (see below). -- Not the most useful way to present results in a way that might help policy-makers though, since no direct, intuitive way to interpret the log-odds coefficients substantively and among each other. --- ## Odds Ratios .pull-left[ Another approach is to transform the logit’s log-odds coefficients into something more intuitive to support substantive interpretation. Recall from Stats I that odds are a different way of thinking about probability: - If `\(Pr(Y=1)=0.50\)`, then odds 1 to 1 - If `\(Pr(Y=1)=0.75\)`, odds 3 to 1, etc. - Odds range from 0 to \\(\infty\\) (but never negative). The .red[odds ratio] is the change in the odds of Pr(Y=1) associated with a one unit change in our covariate. Odds much more intuitive than log-odds! Would you prefer to bet on a horse winning at the track based on log-odds of -1.95 or an odds ratio of 7 to 1? ] .pull-right[  ] ??? For more on probability and odds see [this](https://stats.seandolinar.com/statistics-probability-vs-odds/). --- background-image: url(https://media.giphy.com/media/tALnlXYyyDJFS/giphy.gif) background-size: contain --- .pull-left-1[ ### Obtaining odds ratios Can obtain odds ratios by simply exponentiating logistic regression coefficients: `$$\text{Odds Ratio} = \exp(\hat\beta_k\delta)$$` Quick Guide: - OR>1: \\(\Pr(Y=1) > \Pr(Y=0)\\) - OR<1: \\(\Pr(Y=1) < \Pr(Y=0)\\) - OR=1: \\(\Pr(Y=1) = \Pr(Y=0)\\) Can then also translate into percentage change in odds: `$$\text{Percentage Change} = 100[\exp(\hat\beta_k\delta) - 1]$$` ] .pull-right-2[ ```r # sjPlot reports OR by default tab_model(fullmodel) ``` <table style="border-collapse:collapse; border:none;"> <tr> <th style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; text-align:left; "> </th> <th colspan="3" style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; ">War</th> </tr> <tr> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; text-align:left; ">Predictors</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">Odds Ratios</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">CI</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">p</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">(Intercept)</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.00</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.00 – 0.28</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.017</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">VotedBush</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.12</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.03 – 1.23</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.012</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">Republican [Republican]</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">20.47</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">3.69 – 384.20</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.005</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm; border-top:1px solid;">Observations</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left; border-top:1px solid;" colspan="3">100</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm;">R<sup>2</sup> Tjur</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">0.315</td> </tr> </table> E.g. logit estimate \\(\hat\beta\\) = 3.02, means being Republican - ...increases the log-odds of voting for war by 3.02 - ...increases the odds by \\(\exp(3.02) = 20.47\\) (or 20.49) - ...increases the odds by \\(100[\exp(3.02)-1] = 1947\\)% What you _cannot_ say: Republicans 1947% more likely to vote for war: that’s a probability statement (risk) not odds. ] ??? Probability still needs to be between 0 and 1, remember. The `optiRum` package has some neat functions for translating between logit coefficients, odds, and probabilities. --- ### Oddities with ORs Mood (2010) argues that, because log-odds and ORs also reflect unobserved heterogeneity in the DV (even if unrelated to IVs), problematic to interpret them substantively, or compare them across model specifications (different IVs), across samples, across groups within samples, and across time... -- Mood (2010) suggests: - Pick a continuous instead of a binary DV (not always possible though) - Use predicted probabilities, marginal effects, and average marginal effects (AMEs) as quantities of interest - Use LPM if non-linearity not a real concern in your model (OLS estimates easier to interpret and compare) -- Other scholars (e.g. Buis 2017) argue that issues of unobserved heterogeneity not as problematic if we think of the DV not in terms of a latent variable model (as Mood 2010 does), but as a chance of an event taking place or not. Take-Away: Whether ORs could be problematic or not depends on the theory and the type of research question the researcher has. --- .pull-left[ ## Predicted Probabilities What we really care about, in most cases, is the effect of changes in `\(X\)` on the probability of the actual event of interest. To get this is a bit more involved than with OLS though, since effect of covariates is linear only with respect to the log-odds, not `\(Y\)` itself. Because model nonlinear, real net effect of a change in `\(X\)` depends on the constant, other `\(X\)`s and their parameter estimates. Unlike in a linear regression model, the first derivative of our (binary) logit function depends on `\(X\)` and \\(\hat\beta\\): $$ \frac{\partial\Pr(\hat{Y}_i = 1)}{\partial X_k} \equiv \lambda(X) = \frac{\exp(X_i\hat\beta)}{[1 + \exp(X_i\hat\beta)]^2}\hat\beta_k $$ ] .pull-right[ Practically, this means that if you’re interested in the effect of a one-unit change in `\(X\)` on `\(Pr(Y_i = 1)\)`, how much change there is depends critically on “where you are on the curve”. <img src="STAT_L5_Binary_files/figure-html/predprobplot-1.png" width="504" /> This means we need to calculate predicted probabilities of `\(Y\)` at specific values of key predictors. ] --- ### Using predict() Fortunately, we can simply pass data on our independent variables, either in-sample, out-of-sample, or totally-made-up, to `predict()` and see what probability of a 1 would be predicted by the model: ```r predict(fullmodel, type="response")[1:4] ``` ``` ## 1 2 3 4 ## 0.9850607 0.9850607 0.9968734 0.9968734 ``` ```r (test <- data.frame(Republican = as.factor(c("Democrat","Democrat","Republican","Republican")), VotedBush = seq(20,80, by = 20))) ``` ``` ## Republican VotedBush ## 1 Democrat 20 ## 2 Democrat 40 ## 3 Republican 60 ## 4 Republican 80 ``` ```r predict(fullmodel, newdata=test, type="response") ``` ``` ## 1 2 3 4 ## 0.03997294 0.28609572 0.98749105 0.99868560 ``` --- ## Marginal Effects .pull-left[ Marginal effects (ME) are popular in some disciplines (e.g. Economics) because they offer some of the same advantages that the Linear Probability Model (LPM) does: a good, single number approximation to the amount of change in \\(\Pr(Y=1)\\) produced by a 1-unit change in `\(X\)`. These are marginal effects, because they show how \\(\Pr(Y=1)\\) changes after controlling for all other variables in the model. However, some argue that marginal effects for categorical independent variables are easier to understand and also more useful than marginal effects for continuous variables. Plotting marginal effects is made super easy by the `{sjPlot}` package in R. See [here](https://strengejacke.github.io/sjPlot/articles/plot_marginal_effects.html). ] .pull-right[ ```r plot_model(fullmodel, type = "pred", terms = c("VotedBush","Republican"), colors = c("blue","red")) + theme_minimal() ``` <img src="STAT_L5_Binary_files/figure-html/plotmargins-1.png" width="504" /> ] --- ### Average Marginal Effects One can also calculate AMEs (average marginal effects), which represent the average differences in values associated with a unit change in the specified covariate. ```r library(margins) summary(margins(fullmodel)) ``` ``` ## factor AME SE z p lower upper ## RepublicanRepublican 0.3170 0.0724 4.3768 0.0000 0.1751 0.4590 ## VotedBush 0.0134 0.0043 3.0950 0.0020 0.0049 0.0219 ``` This suggests that being a Republican senator increases the probability of voting for war by 32 percentage points _on average_. Each percentage point increase in the proportion that had voted for Bush over Gore in the previous presidential election increases the probability of voting for war by ~1 percentage point. --- ## Summary So, logit interpretation harder than OLS, BUT ... - Not that much harder (actually just “more involved”) - Different approaches to support interpretation -- For now, remember three key points: 1. Nearly all of these approaches require one to be cognizant of “where we are on the curve”. 2. When it comes to interpretation, a picture really is often more valuable than text or tables, and can help guard against misinterpretation. 3. With very rare exceptions, never a good idea to present quantities of interest without their associated measures of uncertainty. --- class: center, middle # Evaluation .pull-1[.circleoff[]] .pull-1[.circleoff[]] .pull-1[.circleon[]] ??? Great, we’re all done, right? Not just yet. Some critical questions that still remain: - Is the model any good? - How well does the model fit the data? - Are the predictions accurate? - Which predictors are most important? We’ll talk about three general ways of conveying this information: - Pseudo-R2 - Proportional reduction in error (PRE) - ROC curves. We must now examine the model to understand how well it fits the data and generalizes to other observations. The evaluation process involves the assessment of three distinct areas of model utility: goodness of fit, tests of individual predictors, and validation of predicted values. While the following content isn’t exhaustive, it should provide a compact ‘cheat sheet’ and guide for the modeling process. --- ### Pseudo R-squared One place to start would be to ask whether we can just use the \\(R^2\\). However, there is no \\(R^2\\) statistic that explains the proportion of variance in the dependent variable that is explained by the predictors like with OLS. Number of so-called pseudo-\\(R^2\\) metrics based on comparing log-likelihoods though, e.g. McFadden’s \\(R^2\\): - defined as \\(1 - \frac{\log L_F}{\log L_0}\\) where \\(\log(L_F)\\) is the log likelihood value for the fitted model and \\(\log(L_0)\\) is the log likelihood for the null model with only an intercept as a predictor. - ranges from 0 to just under 1, with values closer to zero indicating that the model has no predictive power. .pull-left-1[ ```r pscl::pR2(fullmodel)["McFadden"] ``` ``` ## fitting null model for pseudo-r2 ``` ``` ## McFadden ## 0.3335115 ``` ] .pull-right-2[ Not always all that useful though (Maddala 1983): - Odd statistical propertise: Even for a “perfect fit” model, the pseudo-\\(R^2\\) will be less than 1.0, and sometimes, a lot less... - Rarely offers intuitive substantive interpretation and doesn’t really tell us anything that we can’t also get from the global LR test. Generally, I don’t advise using pseudo-\\(R^2\\)s, see more discussion [here](https://statisticalhorizons.com/r2logistic). ] --- ### Akaike’s information criterion (AIC) Better to report the log-likelihood and use AIC and BIC to compare models (as discussed last week). ```r partyonly <- glm(War ~ Republican, data=iraqVote, family=binomial(link="logit")) tab_model(partyonly, fullmodel, show.loglik = T, show.aic = T, show.r2 = F) ``` <table style="border-collapse:collapse; border:none;"> <tr> <th style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; text-align:left; "> </th> <th colspan="3" style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; ">War</th> <th colspan="3" style="border-top: double; text-align:center; font-style:normal; font-weight:bold; padding:0.2cm; ">War</th> </tr> <tr> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; text-align:left; ">Predictors</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">Odds Ratios</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">CI</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">p</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">Odds Ratios</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; ">CI</td> <td style=" text-align:center; border-bottom:1px solid; font-style:italic; font-weight:normal; col7">p</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">(Intercept)</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.32</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.76 – 2.32</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.329</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.00</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">0.00 – 0.28</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; col7"><strong>0.017</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">Republican [Republican]</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">36.41</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">7.06 – 669.38</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "><strong>0.001</strong></td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">20.47</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">3.69 – 384.20</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; col7"><strong>0.005</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; ">VotedBush</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "></td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "></td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; "></td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.12</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; ">1.03 – 1.23</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:center; col7"><strong>0.012</strong></td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm; border-top:1px solid;">Observations</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left; border-top:1px solid;" colspan="3">100</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left; border-top:1px solid;" colspan="3">100</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm;">AIC</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">83.500</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">77.884</td> </tr> <tr> <td style=" padding:0.2cm; text-align:left; vertical-align:top; text-align:left; padding-top:0.1cm; padding-bottom:0.1cm;">log-Likelihood</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">-39.750</td> <td style=" padding:0.2cm; text-align:left; vertical-align:top; padding-top:0.1cm; padding-bottom:0.1cm; text-align:left;" colspan="3">-35.942</td> </tr> </table> This is good, but it doesn't tell us whether the lower LogL/AIC in the full model (which is better) is _that_ much better. --- .pull-left[ ### Likelihood Ratio Test We introduced the likelihood ratio test last week. Removing predictor variables from a model will almost always make the model fit less well. The LRT compares the likelihood scores of the two models to determine if the difference between the two is statistically significant. We can thus test whether the predictors are _jointly significant_. `\(H_0\)` is that the reduced/restricted/smaller model is true, and `\(H_a\)` is that complete/general/larger model is true. A `\(p\)`-value for the overall model fit statistic less than 0.05 would compel us to reject the null hypothesis. It would provide evidence against the reduced model in favor of the current model. ...`fullmodel` fits the data significantly better! ] .pull-right[ ```r anova(partyonly, fullmodel, test ="Chisq") ``` ``` ## Analysis of Deviance Table ## ## Model 1: War ~ Republican ## Model 2: War ~ VotedBush + Republican ## Resid. Df Resid. Dev Df Deviance Pr(>Chi) ## 1 98 79.500 ## 2 97 71.884 1 7.616 0.005785 ** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` ```r lmtest::lrtest(partyonly, fullmodel) ``` ``` ## Likelihood ratio test ## ## Model 1: War ~ Republican ## Model 2: War ~ VotedBush + Republican ## #Df LogLik Df Chisq Pr(>Chisq) ## 1 2 -39.750 ## 2 3 -35.942 1 7.616 0.005785 ** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` ] ??? The likelihood ratio test can be performed in R using the lrtest() function from the lmtest package or using the anova() function in base. --- .pull-left-1[  ] ## Classification Evaluation Since logistic regression is a classificatory exercise, we can also consider the .red[accuracy] of the model in terms of - .red[sensitivity] (quantifying the avoidance of false negatives, or probability of detection) and - .red[specificity] (the same for false positives, or probability of false alarm). Classificatory tests (e.g. airport security, COVID) trade off between these two. .pull-center[ | True condition positive | True condition negative --------|---------|--------- Predicted condition positive | True positive (TP) | False positive (Type I error) | Precision `\(= \frac{TP}{TP + FP}\)` Predicted condition negative | False negative (Type II error) | True negative (TN) | NPV `\(= \frac{TN}{TN + FN}\)` | Sensitivity/Recall `\(= \frac{TP}{TP + FN}\)` | Specificity `\(= \frac{TN}{TN + FP}\)` ] --- .pull-left[ ### ROC Curves .red[Receiver operating characteristic] (ROC) curve plots classifier performance, visualising [trade-off](https://www.r-bloggers.com/roc-curves/) between TPR (Sensitivity) and TNR (1-Specificity). Basically, ROC traces %TPR as prediction cutoff 1->0. <!-- As cutoff lowered, model should mark more of actual 1’s as 1 and fewer actual 0’s as 1’s. --> I.e. curve should rise steeply, indicating that TPR (y-axis) rises faster than FPR (x-axis) as cutoff decreases. Following the ROC's [nice probabilistic interpretation](https://www.r-bloggers.com/probabilistic-interpretation-of-auc/), we can measure the .red[Area under ROC] curve (AUROC or AUC) to summarise overall classifier performance. AUC ranges from 0.50 to 1.00, with higher better: - 0.90-1.00 = Excellent (A) - 0.80-0.90 = Good (B) - 0.70-0.80 = Fair (C) - 0.60-0.70 = Poor (D) - 0.50-0.60 = Total Failure (F) ] .pull-right[ .panelset[ .panel[ .panel-name[Code] ```r library(plotROC) test <- data.frame(resp = c(iraqVote$War), PartyOnly = predict(partyonly, iraqVote, type="response"), FullModel = predict(fullmodel, iraqVote, type="response")) test <- melt_roc(test, "resp", c("PartyOnly","FullModel")) out <- ggplot(test, aes(d = D, m = M, colour = name)) + geom_roc(n.cuts = 0) + style_roc(theme = theme_minimal) out + annotate("text", x = .75, y = .25, label = paste(paste(unique(test$name)[2:1], "AUC =", round(calc_auc( out)$AUC, 2)), collapse = "\n")) ``` ] .panel[ .panel-name[Plot]  By these criteria, full voting model predicts well, but model including only party misses information necessary to better distinguish positive and negative cases. ] ] ] ??? Another option for plotting ROC curves is the [`MLeval` package](https://www.r-bloggers.com/how-to-easily-make-a-roc-curve-in-r/). ROC curves were invented during WWII to help radar operators decide whether the signal they were getting indicated the presence of an enemy aircraft or was just noise. --- #### Unrolling the ROC .panelset[ .panel[ .panel-name[Full Model] .pull-left[ <img src="STAT_L5_Binary_files/figure-html/rocfull-1.png" width="504" /> ] .pull-right[ <img src="STAT_L5_Binary_files/figure-html/rollfull-1.png" width="504" /> ] ] .panel[ .panel-name[Perfect Model] .pull-left[ <img src="STAT_L5_Binary_files/figure-html/rocideal-1.png" width="504" /> ] .pull-right[ <img src="STAT_L5_Binary_files/figure-html/rollideal-1.png" width="504" /> ] ] .panel[ .panel-name[Random Model] .pull-left[ <img src="STAT_L5_Binary_files/figure-html/rocrando-1.png" width="504" /> ] .pull-right[ <img src="STAT_L5_Binary_files/figure-html/rollrando-1.png" width="504" /> ] ] ] ??? The ROC is quite informative once trained, but can be rather confusing for beginners. What does the ROC tell us? Why is a bigger AUC better? What does it all [_mean_](https://www.r-bloggers.com/2020/08/unrolling-the-roc/)? --- .pull-left-1[ ## PR Curves  ] We might also be interested in the .red[relevance] of the model through the concepts of .red[precision] (how useful positives are) and .red[recall] (how complete the results are). .red[Precision recall] (PR) curves are useful for model evaluation when: - there is an extreme imbalance in the data, and - analyst is interested particuarly in one class E.g. credit card fraud, where fraud extremely rare compared with non fraud. PR curves are sensitive to: - which class is defined as positive, unlike the ROC curve which is a more balanced method. - class distribution, so if the ratio of positives to negatives changes across different analyses, then the PR curve cannot be used to compare performance between them. - false positives (because they use precision instead of specificity in combination with recall/sensitivity), which is very helpful when negatives >> positives. So if many more negatives than positives, use PR curves instead. Unfortunately, the area under the PR curve does not have a probabilistic interpretation like ROC. --- background-image: url(https://i2.wp.com/modelplot.github.io/img/cartoonrocplot.jpg?w=456&ssl=1) background-size: contain --- ## Wald Test We learned last week that we can use the Wald test to evaluate the statistical significance of each coefficient in the model. It is calculated by taking the ratio of the square of the regression coefficient to the square of the standard error of the coefficient. Idea is to test the hypothesis that the coefficient of an feature in the model is significantly different from zero. If test fails to reject null hypothesis, removing the variable from the model should not substantially harm the fit of that model. ```r library(survey) regTermTest(fullmodel, "VotedBush") ``` ``` ## Wald test for VotedBush ## in glm(formula = War ~ VotedBush + Republican, family = binomial(link = "logit"), ## data = iraqVote) ## F = 6.308672 on 1 and 97 df: p= 0.013666 ``` ```r regTermTest(fullmodel, "Republican") ``` ``` ## Wald test for Republican ## in glm(formula = War ~ VotedBush + Republican, family = binomial(link = "logit"), ## data = iraqVote) ## F = 7.939307 on 1 and 97 df: p= 0.0058624 ``` ??? Since p<0.05 for both, it looks like both of these variables are important and belong in the full model! --- # Some Final Words Note that this lecture hardly exhausts the possible approaches for estimating and interpreting binary response models: - other methods of model evaluation and (cross-)validation, as well as identifying variable importance - residual analysis, including influence statistics, outlier detection, etc. - problems of identification -- Lastly, you might be asking “Why should we think that anything is ever distributed according to a standard logistic distribution?” The point is not that we are saying that a set of probabilities _are_ logistically distributed, but it is useful to consider errors for log-odds to be logistically distributed to model binary outcomes. -- Can you think of something that should be logistically distributed? -- [_Coronavirus_](https://flowingdata.com/2020/03/10/visual-explanation-of-exponential-growth-and-epidemics/) can't grow exponentially forever... --- class: center, middle # Summary .pull-1[.circleon[]] .pull-1[.circleon[]] .pull-1[.circleon[]]